What does it actually take to ship a product where AI operates in the physical world? Where do most teams get stuck? And what should you realistically expect?

This article addresses the questions we hear most often from engineering leaders navigating this space without all the hype.

First, Let’s Define The Thing

Physical AI lives at the intersection of software, hardware, and the real world.

These systems take in signals from cameras, lidar, force sensors, microphones, and other inputs. They interpret those signals, make decisions, and drive actuators — motors, servos, control surfaces, braking systems — in real time. And they do it within hard constraints: millisecond-level latency budgets, limited power, strict safety requirements, and environments that don’t behave predictably.

In this domain, the output isn’t a stream of tokens or a ranked list. It’s a trajectory adjustment. A torque command. A safety interlock. A timing decision that determines whether a device responds correctly or fails under pressure. When you’re working in robotics, medical systems, or autonomous platforms, the consequences of being wrong are physical and immediate.

That reality changes how these systems need to be engineered. Physical AI demands tight integration across embedded software, control systems, sensor fusion, networking, and validation. It requires hardware-in-the-loop testing, rigorous QA, and architectures built for determinism and fault tolerance. As we’ve seen in mission-critical robotics and medical platforms, success depends on treating the system as a unified whole — not a collection of loosely connected components.

This is layered, multidisciplinary engineering. The physics matter. The timing matters. The edge cases matter. And solving it takes more than plugging into an API. It takes systems thinking from the ground up.

Q1: What Makes Physical AI So Much Harder Than Software AI?

The honest answer: you can’t just iterate in production.

In software AI, if your recommendation model makes a bad prediction, a user gets a weird movie suggestion. You log it, retrain it, and redeploy it. With Physical AI, a bad prediction can mean a damaged product, a safety incident, or a failed mission. The feedback loop is slower, the stakes are higher, and the environments are inherently unpredictable.

A few specific factors compound the difficulty:

Real-time constraints are non-negotiable. If a robot arm’s control loop has 200ms of latency where it should have 5ms, the system doesn’t just perform poorly, it can be dangerous. Efficient, domain-specific inference at the edge isn’t a nice-to-have. It’s a hard requirement that shapes your entire architecture from day one.

Environments don’t cooperate. Dust. Vibration. Temperature swings. Occlusion. Reflective surfaces. Lighting shifts. The physical world routinely breaks the assumptions models are trained on. Teams often underestimate how much edge-case coverage it takes before a system is truly ready for deployment.

Hardware, firmware, and software are co-dependencies. In many traditional software domains, hardware details are abstracted through well-defined layers. In Physical AI, the sensor quality, the processor architecture, the communication bus, and the software stack are deeply coupled. A decision made at the embedded firmware level can propagate into fundamental constraints on what your ML models can do and vice versa.

Q2: We Have an AI Model. Why Is It Failing in the Real World?

This is one of the most common and painful questions engineering leaders face after a promising prototype hits production.

The usual culprit is training data distribution mismatch. The model was trained on data that doesn’t reflect the actual conditions of deployment. Real-world data is messy, rare scenarios are chronically underrepresented, and some edge cases are practically impossible to capture through field data collection alone.

The sim-to-real gap is a related and well-documented problem. A robot trained entirely in simulation often fails when deployed because the simulation wasn’t physically accurate enough. If the 3D geometry, material properties, lighting conditions, or physics don’t match reality closely enough, the model learns behaviors that simply don’t generalize.

The approaches that serious teams are converging on:

Physics-based simulation for training. Building virtual environments from real physical models, not simplified or visually polished stand-ins. This is fundamentally different from generative synthetic data. The goal is fidelity to physical laws and system dynamics, not just something that looks realistic on screen.

Targeted edge-case data generation. Instead of generating data evenly across scenarios, deliberately targeting failure modes, edge cases, and environmental conditions that are difficult or impractical to capture through real-world collection.

Continuous data pipelines from deployed systems back into the training loop, so the model improves from real-world exposure over time.

By the time your model is failing in the field, you have a data problem that started much earlier in your development process.

Q3: How Do We Architect for Safety When AI Is Making Real-Time Decisions?

This question doesn’t have a single answer, and any vendor or consultant who tells you it does should be treated with skepticism. But there are architectural principles that mature teams consistently apply.

Layered safety is not optional. AI-driven decisions should sit inside an architecture that includes deterministic safety layers including hard-coded limits, sensor cross-checks, watchdog timers, and clear manual override paths. The AI determines the optimal action; the safety framework ensures that only actions within defined physical and operational boundaries can actually be executed.

Fail-safe states need to be designed in from the beginning. How does the system respond when the model is uncertain? When a sensor delivers invalid or degraded data? When an action would push the platform beyond a safety threshold?

These aren’t edge cases to handle later. They’re core design requirements, shaping hardware selection, firmware architecture, fault handling, and overall state management from the start.

Certification requirements are almost always underestimated. Depending on your domain — medical devices, aerospace, industrial automation, autonomous vehicles — regulatory frameworks like FDA guidance, DO-178C, IEC 61508, and ISO 26262 impose specific requirements on how AI-driven decisions are validated. Retrofitting compliance into a system that wasn’t designed with it in mind is one of the most expensive mistakes a product team can make.

Safety in Physical AI is an architectural discipline, not a feature you add before shipping.

Q4: What Does a Realistic Development Timeline Look Like?

Timelines are often misjudged here — especially by teams accustomed to how quickly software-only AI products can move.

Physical AI development has timeline dependencies that pure software projects simply don’t:

Hardware iteration cycles are slow. PCB revisions, mechanical redesigns, sensor procurement lead times don’t move at sprint velocity. If your software team outpaces hardware readiness, you accumulate integration risk that surfaces late and expensively.

Integration testing surfaces problems that unit testing doesn’t. A sensor fusion algorithm that works perfectly against recorded data can behave unexpectedly when connected to real sensors in a real mechanical assembly. The integration phase for Physical AI systems is typically longer and more unpredictable than teams plan for.

Safety validation takes time that can’t be compressed. Particularly for safety-critical applications, validation testing against defined failure modes is not a checkbox. It’s an extended process that may require third-party certification.

A practical rule of thumb: take your most optimistic timeline and add 30–40% for integration and safety validation. Then build in additional margin for sensor behavior, calibration drift, and data pipeline issues because they will surface. This isn’t pessimism; it’s pattern recognition. In Physical AI, integration exposes realities that don’t show up in controlled demos. Safety validation takes longer than expected. Hardware and data rarely behave perfectly the first time.

Teams that dismiss this buffer as overly conservative tend to slip schedules. Teams that plan for it as a matter of discipline are the ones that ship.

Q5: Should We Build a Custom Model or Use a Foundation Model?

The answer is almost always “both, in different layers of your stack,” but the question reflects an important framing issue.

Foundation models (including emerging Physical AI platforms like NVIDIA’s Cosmos) provide broad world understanding. They can help with perception, semantic reasoning, and natural language interfaces. But they are not optimized for domain-specific, low-latency control tasks.

The architecture that tends to work looks like this:

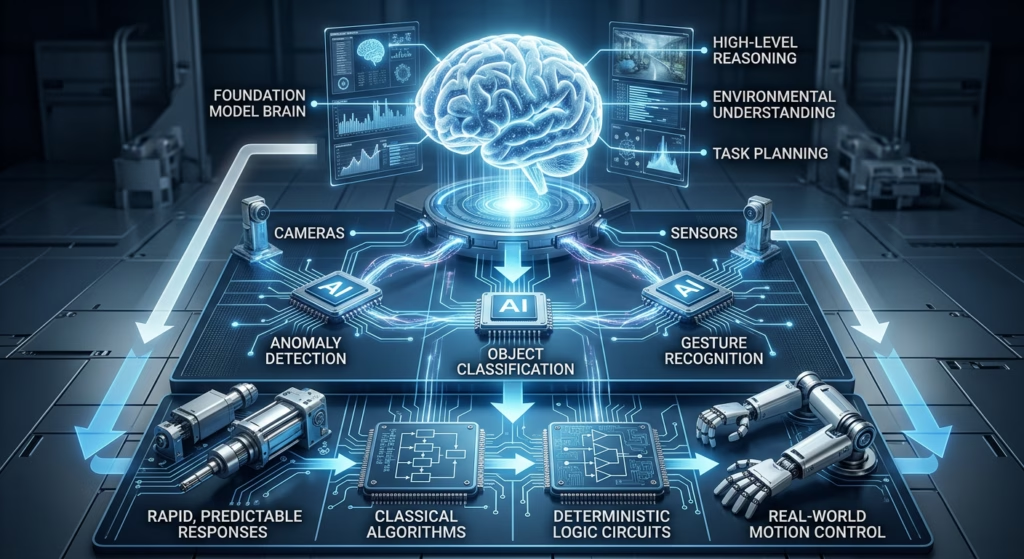

Foundation models handle high-level reasoning, environmental understanding, and task planning. Fine-tuned or purpose-built models handle domain-specific perception tasks like anomaly detection on a production line, object classification in a specific environment, gesture recognition for a specific user population. Classical control algorithms and deterministic logic handle low-level actuation, where latency and predictability are paramount.

Where teams tend to get into trouble is at the extremes.

On one end, applying a general-purpose model to a problem that demands deterministic, real-time, domain-specific inference at the edge where latency, power constraints, and safety requirements leave little room for abstraction.

On the other, investing months building a custom model from scratch for a task that a well-adapted foundation model could solve with a fraction of the effort.

Both mistakes stem from the same issue: misalignment between the problem’s operational constraints and the model strategy. The right choice addresses what fits the system, the environment, and the performance envelope you actually have to operate within.

Q6: We’re Struggling to Get Enough Training Data. What Do Teams Do?

Data scarcity is consistently one of the top practical blockers for Physical AI development, and it compounds in ways that aren’t always obvious early on.

Real-world data collection for physical systems is expensive, slow, sometimes impossible (how do you capture training data for a rare failure mode without inducing the failure?), and often comes with privacy or safety constraints that limit what you can gather and retain.

The strategies gaining serious traction:

Physics-based synthetic data. Simulated environments built on real physical models can generate massive, accurately-labeled datasets at a fraction of the cost and time of field collection. The critical qualifier is “physics-based” where the simulation accurately models materials, lighting, dynamics, and sensor behavior. Purely visual synthetic data often fails to transfer to real-world performance even when it looks convincing.

Domain randomization. Systematically varying simulation parameters — lighting, object placement, surface properties, sensor noise profiles — to expose the model to controlled variability before it encounters it in the field.

The goal isn’t randomness for its own sake. It’s building resilience so the model maintains performance when real-world conditions shift outside the narrow band seen in initial data collection.

Targeted edge-case generation.Instead of distributing synthetic data evenly across scenarios, focus it on the gaps — failure modes, edge conditions, and low-frequency events that real-world collection rarely captures in sufficient volume.

The objective is targeted coverage: systematically reinforcing the situations most likely to expose weaknesses, rather than overtraining on the conditions the system already handles well.

One critical distinction to understand: there is a meaningful difference between photorealistic synthetic data and physically accurate synthetic data. Realism in appearance doesn’t guarantee transfer to real-world performance. Teams that conflate the two often find their synthetically-trained models performing well in simulation but struggling the moment they’re deployed.

Q7: How Do We Manage the Integration Complexity?

This is where many Physical AI projects slow to a crawl, particularly for teams whose engineering culture was built around cloud-native software.

Physical AI systems typically span a stack that includes embedded firmware on microcontrollers or specialized processors, middleware that abstracts hardware and manages real-time data, edge compute running inference and control logic, cloud or backend systems for model updates and fleet management, and APIs and protocols connecting all of it.

Each layer has its own tooling, performance requirements, and failure modes. Engineers with expertise in one layer often have limited experience with adjacent ones. And the interfaces between layers, particularly between embedded/firmware and the software above it, are where the hardest integration problems live.

Practical approaches that tend to work:

Invest early in hardware abstraction layers that let software teams work against well-defined interfaces rather than specific hardware implementations. This pays dividends throughout the entire development lifecycle.

Use frameworks built for robotics, like ROS2, rather than adapting web or cloud frameworks to a problem domain they weren’t designed for.

Treat sensor fusion as a first-class architectural concern, not an afterthought. How you combine, validate, and weight data from multiple sensors fundamentally affects the reliability of every AI decision downstream.

Define the cloud/edge split early. What must happen locally due to latency or connectivity requirements? What can happen in the cloud? Getting this wrong late in development can require significant, painful architectural rework.

Q8: How Do We Know When Our System Is Actually Ready to Deploy?

This might be the question with the biggest gap between what teams wish was true and what actually holds up.

“It worked in testing” is not sufficient. The relevant questions are:

What failure modes have we validated? Not just that the system works under normal conditions, but that it fails gracefully, predictably, and safely when sensors degrade, inputs fall outside the training distribution, or environmental conditions change.

Have we tested across the actual distribution of real-world conditions? Temperature ranges, lighting variations, vibration environments, network conditions, power supply fluctuations, whatever the deployment context imposes.

Can we observe the system in production? Observability for Physical AI means more than application logging. It means tracking model confidence, sensor health, actuation performance, and anomaly patterns over time. Without this, you’re operating blind in a system where blind spots can be consequential.

Do we have a rollback and override path? For any autonomous system making consequential decisions, the ability to intervene, override, or revert to a known-safe state is non-negotiable.

Teams that deploy successfully define readiness across multiple dimensions — performance, safety, latency, fault tolerance, environmental robustness — each with explicit, testable criteria.

Readiness isn’t a feeling. It’s a set of conditions the system can demonstrably meet under real operating constraints.

The Bottom Line

Physical AI is hard because system level constraints compound across the stack. Sampling rates affect feature quality. Memory bandwidth affects model selection. Bus latency affects control stability. Safety requirements constrain the action space. Assumptions that hold in offline evaluation often fail under real time conditions.

The challenge is deploying models inside tightly coupled systems with deterministic timing, noisy sensors, limited compute, and strict safety boundaries. Model performance alone does not determine success. System performance does.

Teams that succeed treat this as a systems problem from the start. They account for hardware constraints, inference latency, fault handling, and safety architecture early. They invest in high fidelity simulation and physics-based data to close coverage gaps before deployment. They define readiness with explicit, testable criteria across performance, safety, and robustness.

Teams that struggle underestimate integration complexity, delay safety planning, mistake visual realism for physical accuracy in training data, or apply software only AI patterns to a domain that does not support them.

Physical AI is already creating value across robotics, autonomous vehicles, industrial automation, and healthcare. Capturing that value requires engineering leadership that understands clearly and honestly what is being built and what it takes to deploy it responsibly.