Edge AI is transforming how we build intelligent systems. From smart cameras to wearable devices, the ability to process visual information directly on hardware—without sending data to the cloud—opens up applications that were impossible just a few years ago. But there’s a significant gap between running an AI model on a server and deploying it effectively on resource-constrained edge devices.

What Makes Edge AI Different?

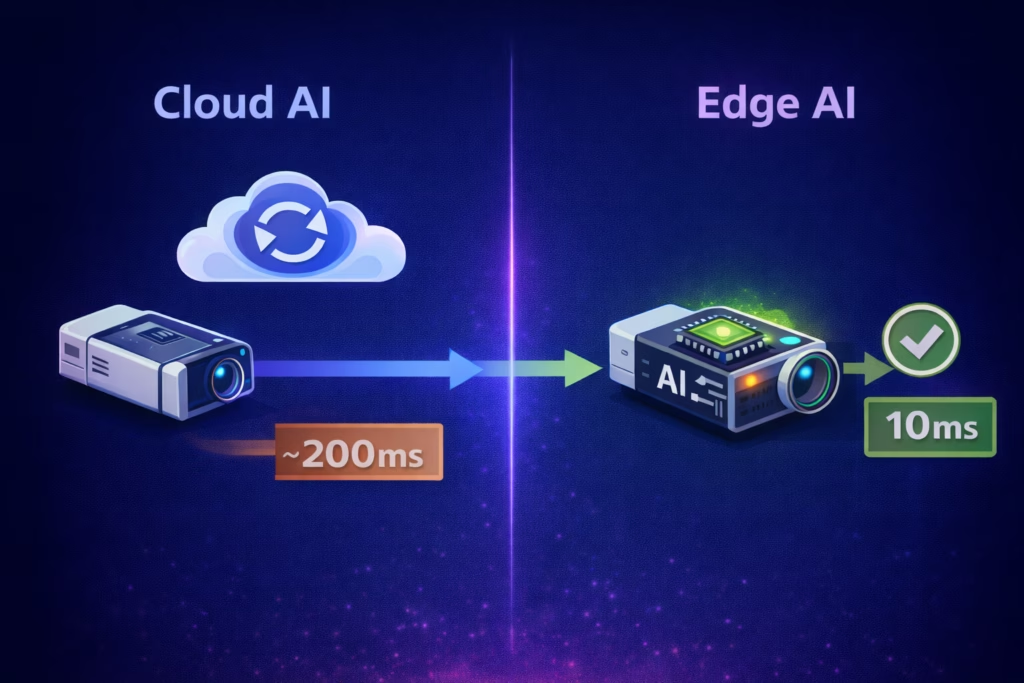

Traditional cloud-based AI systems send data to centralized servers for processing. For example, when a security camera detects motion and sends video to the cloud for analysis, that video must travel across the internet to a data center where powerful GPUs process it before sending results back. This centralized approach works well for many applications, but it has limitations:

- Latency: Round-trip network communication adds delays

- Bandwidth: Sending raw video frames (potentially 30 per second) creates enormous data overhead

- Connectivity: Many applications need to function offline or in areas with poor network access

- Cost: Centralized processing means ongoing server infrastructure costs

- Privacy & Security: Sensitive data must leave the device

Edge AI solves these problems by performing inference directly on the device. According to Cisco, edge AI processes data locally to reduce latency, enhance privacy and security, and minimize bandwidth requirements—enabling real-time decision-making without continuous cloud connectivity. Your smartphone already does this extensively—many of the AI features you use daily run locally, preserving battery life and privacy while delivering instant results.

The Vision Processing Sweet Spot

Vision applications represent one of the strongest use cases for edge AI. As industry research from Viso.ai highlights, edge computing is essential to computer vision operations because it improves response time, reduces bandwidth, and enhances security compared to cloud-based approaches. Consider a safety system monitoring a workspace for human presence. Sending raw video streams to a central server for processing is inefficient. Instead, edge AI can:

- Process each frame locally using specialized hardware

- Identify relevant objects (people, specific tools, safety zones)

- Transmit only essential information (coordinates, timestamps, classifications)

- Enable real-time response with minimal latency

This approach reduces a flood of raw camera data to a concise stream of actionable intelligence. The bandwidth savings alone can be transformative when deploying systems at scale.

Training vs. Deployment: Understanding the Divide

One crucial distinction often misunderstood: AI training and AI deployment are fundamentally different processes with vastly different computational requirements.

Training a convolutional neural network for machine vision involves repeatedly iterating thousands or millions of images and adjusting weights across to reward correct inferences and discourage incorrect ones. This tuning process is known as backpropagation. Because of the enormous number of computations involved, high numerical precision is essential to prevent errors from accumulating, so 32-bit floating-point values are used throughout the training process. This is computationally intensive and typically requires significant processing power. According to research from Nebius, training large models like GPT-4 can take 90-100 days on thousands of GPUs, while most vision models still require days or weeks on powerful hardware.

When the model is deployed the inference process works with a single image at a time with far fewer computations, so this high level of precision is no longer required. Because inference, unlike training, is a forward process and doesn’t involve backpropagation, it eliminates special-case computations like division by zero, greatly simplifying the math. Critically, this also means full floating-point precision is no longer required, a fact that edge AI accelerators exploit aggressively. While training requires high upfront computational cost, inference runs continuously with lower per-instance resource requirements, making it practical for specialized, low-power chips.

This is why edge AI focuses exclusively on deployment. The mathematical operations involved in inference are highly parallelizable and amenable to hardware acceleration, making them practical for specialized, low-power chips.

Hardware Acceleration: The Enabler

General-purpose processors can run AI models, but they’re not optimized for the specific mathematical operations neural networks require. Enter AI accelerator chips.

These specialized processors are designed for one thing: efficiently crunching through the matrix multiplications and convolutions that form the backbone of neural network inference. Unlike GPUs, which serve multiple purposes, AI accelerators are purpose-built for this workload. Research published in arXiv’s “Optimizing Edge AI” survey demonstrates how specialized AI accelerators enable efficient neural network inference on resource-constrained edge devices through hardware optimization for specific mathematical operations.

Modern edge AI systems combine a standard IoT processor (often running embedded Linux) with one or more AI accelerator cores. The main processor handles general computing tasks while offloading inference work to the accelerators. This architecture enables sophisticated AI capabilities in battery-powered devices.

A key advantage of edge AI accelerators is their ability to execute many low-precision operations in parallel, effectively replacing a single high-precision computation with multiple efficient lower-precision calculations. This architectural approach significantly increases throughput and performance for AI workloads running at the edge. Where training demands 32-bit floating-point arithmetic, accelerators perform multiple lower-precision operations in the same silicon area, supporting various combinations of 16-bit or 8-bit integers or fixed values, 8-bit or 4-bit floating-point values, and in some architectures even 2-bit or 1-bit integers.

Smaller data types mean more work per transistor, less memory bandwidth, and dramatically reduced power draw. But exploiting this efficiency requires carefully adapting a trained model to those reduced data types: selecting bit widths for each layer, ensuring values don’t overflow, and preventing rounding errors from compounding across computations. Those parameters must then be tuned to the specific architecture of the chosen accelerator chip, a process that has far more in common with embedded software development than with model training.

The Optimization Challenge: A Real-World Example

Consider a wearable device with an integrated camera. The constraints are severe:

- Power: Must run for hours on a small battery

- Speed: Must process frames faster than head movement to avoid lag

- Size: Hardware must fit in a compact form factor

- Cost: Components must be economical at production scale

Now add another wrinkle: the device uses a monochrome camera (perhaps for power or size reasons), but available pre-trained models were trained on color images.

Testing reveals that even in good lighting, switching from color to grayscale causes a 20% drop in detection accuracy. In low light or motion scenarios with blur, performance degrades further. Research from Roboflow confirms that when a task relies on color cues, converting to grayscale can significantly degrade performance, especially under challenging lighting conditions or with motion blur.

The solution isn’t simple. You can’t just apply color-trained models to grayscale data and expect good results. The model needs retraining with grayscale imagery that reflects actual deployment conditions, including various lighting scenarios, motion blur, and other real-world challenges.

This is where optimization expertise becomes critical. It’s not enough to get a model running on the hardware. You need to:

- Retrain or fine-tune models for your specific sensor characteristics

- Optimize inference speed to meet real-time requirements

- Balance accuracy, speed, and power consumption

- Account for edge cases and challenging conditions

- Integrate with existing system architecture

While this efficiency enables AI to operate effectively at the edge, it also introduces new engineering challenges when adapting general-purpose machine learning models for accelerator hardware. Models originally designed for full floating-point computation must be carefully analyzed and calibrated to operate with reduced-precision data types. The objective is to determine the most efficient representation for each stage of the processing pipeline.

For fixed-point implementations, this includes selecting the appropriate allocation of bits between integer and fractional values, ensuring computations remain within range while minimizing cumulative numerical error. Without careful calibration, overflow conditions or precision loss can degrade model performance and detection accuracy relative to the original implementation.

Achieving optimal results requires tuning the model for the specific architecture of the target accelerator. This process demands a detailed system-level understanding of both the hardware and the vendor’s software toolchain, often involving iterative adjustment of numerous optimization parameters and calibration settings to reach the best balance of performance, efficiency, and accuracy.

Achieving the right balance between efficiency and precision is critical for delivering high-performance, high-accuracy AI inference at the edge. While accelerator vendors provide software tools that partially automate calibration, maintaining model accuracy often requires deeper analysis of the model’s internal structure to prevent degradation in detection performance.

This process demands both mathematical rigor and a detailed understanding of the accelerator architecture and its supporting toolchain. As a result, optimizing edge AI deployments remains as much an engineering discipline as it is an art. Rigorous evaluation, profiling, and benchmarking are essential to validate performance and ensure the final system meets product requirements.

In one project, we calibrated an object detection model using the accelerator manufacturer’s toolchain and observed an unexpected limitation: the model accurately detected small objects but consistently failed to recognize objects above a certain size threshold. This behavior can occur because early inference layers extract fine-grained features, while later layers combine those features into higher-level patterns, making large-object detection more sensitive to precision constraints.

Through detailed analysis of the model’s internal processing pipeline, we determined that certain stages required higher numerical precision. By adjusting those layers to use 16-bit fixed-point weights instead of 8-bit values, we restored accurate large-object detection while maintaining the performance benefits of the accelerator. This targeted optimization preserved both inference speed and model accuracy in the final system.

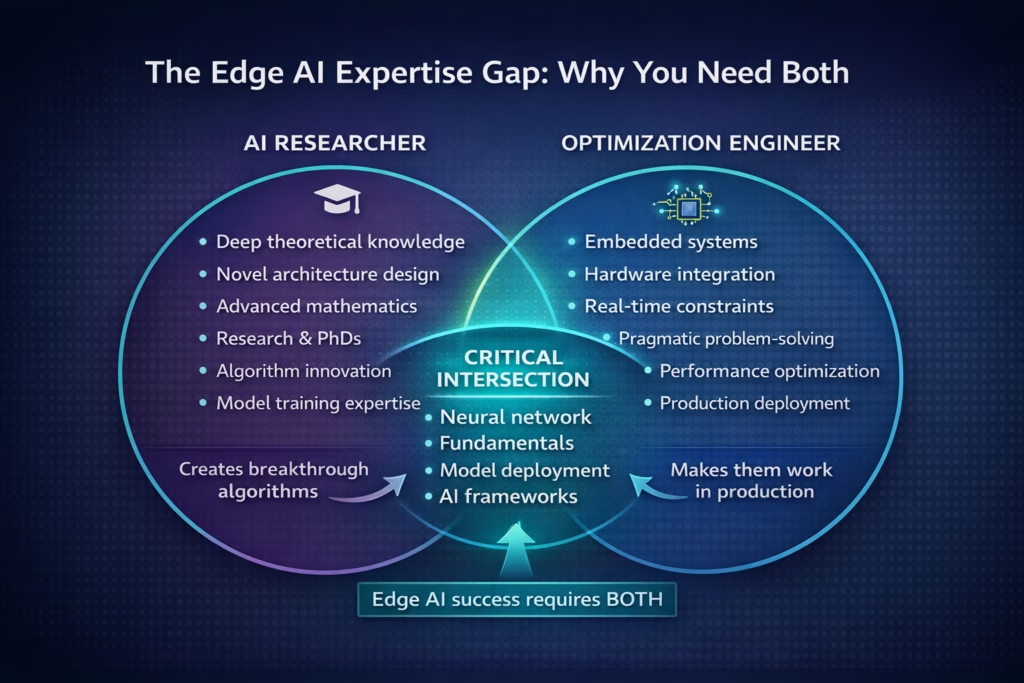

The Expertise Gap

But most real-world applications encounter complications:

- Non-standard sensors or imaging conditions

- Unique performance requirements

- Integration with complex existing systems

- Need for custom models or significant optimization

- Challenging deployment environments

This is where the distinction between AI researchers and AI optimization engineers matters. Developing novel neural network architectures requires deep theoretical knowledge and often advanced degrees. But deploying and optimizing those models for specific hardware platforms requires a different skill set: understanding embedded systems, proficiency with optimization tools, experience with hardware-software integration, and pragmatic engineering problem-solving.

Managing Expectations

One recurring challenge in edge AI projects: clients often underestimate deployment complexity. Modern AI tools have become remarkably accessible—it’s possible to get a basic model running with minimal effort. This accessibility can create unrealistic expectations.

Getting a model to compile and produce output is just the beginning. Production deployment requires:

- Achieving acceptable accuracy under real-world conditions

- Meeting performance targets (frames per second, latency)

- Optimizing for power consumption and thermal constraints

- Handling edge cases and failure modes

- Integrating with broader system functionality

The gap between “it works on my development board” and “it’s ready for production” is substantial. Understanding this upfront prevents disappointment and sets realistic project timelines.

Looking Forward

Edge AI represents a genuine paradigm shift in how we build intelligent systems. The ability to distribute processing to where data originates—rather than centralizing everything—enables applications that were previously impractical or impossible.

But realizing this potential requires more than just access to AI accelerator chips and pre-trained models. It requires expertise in optimization, integration, and the pragmatic engineering work of making sophisticated technology function reliably under real-world constraints. Edge AI solutions lower operating costs through bandwidth reduction, labor efficiency, and asset longevity while enabling faster decision-making at the edge.

As edge AI continues to mature, the companies that succeed will be those that understand not just the AI algorithms themselves, but the full stack of challenges involved in deploying them effectively. The technology is powerful, but engineering matters more than ever.

Want more great insights about Edge AI? Read our article Optimizing Edge AI For Effective Real-Time Decision Making In Robotics – Geisel Software.